The Solution to Newcomb's Paradox

I wrote about this on Facebook in 2018. Here is a restatement of Newcomb's paradox and my view of it.

Hypothetical scenario: Scientists have invented a machine for predicting human decisions. The machine scans a person’s brain, then scientists input a precise description of a possible situation. The machine does some incredibly complicated calculations, then predicts what the subject would do in the given situation. The machine has been found to be 90% accurate: i.e., if a person is put in the given situation, 90% of the time the person does what the machine predicted.

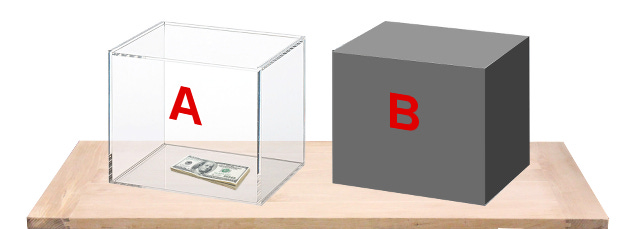

Your brain was scanned yesterday, and scientists entered a description of the choice situation you are about to face, namely: you are shown two boxes, box A and box B. You may take either box B, or both boxes. Box A contains $1000. Box B contains either $1 million or nothing. If the machine predicted that you would take both boxes, then the scientists put nothing in box B. If it predicted that you would take only B, then they put $1 million in B. What should you choose?

First answer: You should maximize your expected payout. (For simplicity, assume utility increases linearly with money.) If you take only B, it is 90% likely that the machine will have accurately predicted that, and you’ll get $1 million. If you take both boxes, it is 90% likely that the machine will have correctly predicted that, so you’ll get only $1000. The expected payout calculation is:

Take 1 box: (0.9)(1,000,000) + (0.1)(0) = 900,000

Take 2 boxes: (0.9)(1,000) + (0.1)(1,001,000) = 101,000

So take 1 box.
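
For readers who want to check the arithmetic, here is a minimal sketch of the same calculation in Python. The function name `evidential_payouts` and the constants are illustrative labels, not part of the original problem statement, and exact fractions are used only to avoid floating-point rounding.

```python
from fractions import Fraction

# Payoffs from the scenario, in dollars.
BOX_A = 1_000
BOX_B_FULL = 1_000_000

def evidential_payouts(accuracy):
    """Expected payouts when your choice is treated as evidence about the prediction."""
    miss = 1 - accuracy
    # One box: B is full only if the machine correctly predicted one-boxing.
    one_box = accuracy * BOX_B_FULL + miss * 0
    # Two boxes: B is empty if the machine was right, full if it was wrong.
    two_box = accuracy * BOX_A + miss * (BOX_A + BOX_B_FULL)
    return one_box, two_box

one_box, two_box = evidential_payouts(Fraction(9, 10))
print(one_box, two_box)  # 900000 101000
```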

Second answer: Box B contains either $0 or $1,000,000, and that cannot be changed, since that was decided yesterday when the machine made its prediction. If it contains $0, then you should take both boxes, to get $1000 instead of $0. If it contains $1,000,000, then you should again take both boxes, to get $1,001,000 instead of $1,000,000. So take both boxes.
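
The state-by-state comparison in this second answer can be sketched as a small payoff table. The names `PAYOFF` and `dominates` are hypothetical helpers chosen for illustration; the check simply asks whether one choice pays strictly more than the other whatever box B contains.

```python
# Payoff table in dollars: PAYOFF[choice][contents of box B].
PAYOFF = {
    "one_box": {"B_empty": 0, "B_full": 1_000_000},
    "two_box": {"B_empty": 1_000, "B_full": 1_001_000},
}

def dominates(a, b, table=PAYOFF):
    """True if choice a pays strictly more than choice b in every state."""
    return all(table[a][state] > table[b][state] for state in table[a])

print(dominates("two_box", "one_box"))  # True
print(dominates("one_box", "two_box"))  # False
```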

Problem: There seems to be a conflict between two principles of rational choice:

Expected Utility Maximization (EUM): Make the choice that maximizes your expected utility.

Dominance: If there is a dominant choice (viz., one that renders you better off under each possible assumption about the state of the world independent of you), take that choice.

Note: Most people who comment on this problem choose either 1 box (the so-called “one-boxers”) or 2 boxes (the “two-boxers”), and then simply reiterate the reasoning for that position as given above. This does not solve the paradox, since it does not remove the appearance of a conflict between two obvious principles of rational choice. To solve the paradox, one must either explain independently why one of the above two decision principles is mistaken, or explain why one of them does not really support the choice that it is said to support.

* * *

Solution: Two-boxing is correct, following the dominance reasoning. The argument for one box is a misuse of the principle of EUM. EUM is a formalization of the intuitive principle that an agent has a reason to do A insofar as A makes it more likely that the agent’s goals will be satisfied. The 1-box argument misunderstands this by interpreting “makes more likely” as “increases the evidential probability relative to the agent’s information”. In fact, having a goal does not (intrinsically) give you a reason to give yourself evidence that the goal will be satisfied; it gives you a reason to cause the goal to be satisfied.

Since you cannot change the past, the correct EU calculation is to treat the past as fixed, and calculate EU given each possible past state of the world. Then take an average of these EU values, weighted by your credence in each possible past state. This way of doing the calculation necessarily preserves the results of dominance reasoning. In this case, the calculation is:

Take 1 box: (c)(1,000,000) + (1 - c)(0) = 1,000,000c

Take 2 boxes: (c)(1,001,000) + (1 - c)(1,000) = 1,000,000c + 1000

where c is your credence that the machine predicted that you would take 1 box. Notice that whatever c is, the expected payoff of 2 boxes is higher than that of 1 box, by $1000. So you should take 2 boxes.
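
As a quick check on this calculation, here is a minimal sketch that evaluates both formulae for several values of c. The function name `causal_payouts` is an illustrative label, and the sample credences are arbitrary; c is treated as the probability that box B already holds the $1 million.

```python
from fractions import Fraction

def causal_payouts(c):
    """Expected payouts with box B's contents held fixed; c = credence that B is full."""
    one_box = c * 1_000_000 + (1 - c) * 0
    two_box = c * 1_001_000 + (1 - c) * 1_000
    return one_box, two_box

for c in (Fraction(0), Fraction(1, 10), Fraction(1, 2), Fraction(9, 10), Fraction(1)):
    one_box, two_box = causal_payouts(c)
    # The last printed value is the advantage of two boxes over one.
    print(c, one_box, two_box, two_box - one_box)
```

For every credence tried, the difference between the two payouts comes out to 1000.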

Btw, on realizing this, you may want to raise your value of c. This won’t make a difference. Just plug the new, higher value of c into the above formulae, and the EU of 2 boxes is still higher.