The Case for Tyranny

What is the strongest argument against libertarianism? Occasionally, someone asks that. Until recently, I didn’t think there were any very strong ones.

But today I think there is at least one important argument against liberty that is hard to answer. This isn’t exactly an argument “for tyranny” (as my title colorfully puts it — I have to attract clicks, you know) — there’s no interesting argument for having the government send people to concentration camps, or prohibit people from criticizing the government, or prohibit private property, etc. But it’s an argument for a pretty intrusive state. I’m going to explain it here to see if any readers can identify a good response to it.

I. You Should Care About Existential Risks

Assume that intelligent life is extremely valuable. If the human species continues for a long time, a large number of future people will exist, with valuable lives. Due to recent and expected future population growth, there will be many more future people than the people who have existed up till now.

However, there is a chance that something will extinguish the human species in the near future. Of course, something will eventually extinguish the human species — that’s guaranteed. But it could happen either soon or in the distant future. If it happens soon, that will cut off all the enormous future value that the human species could otherwise realize. There is a good chance that then no more intelligent life would ever exist on Earth.

This means that actions or events that slightly increase the chances of the species being extinguished soon have very large expected costs. For example, if the species were to last for another million years, it’s plausible that that would mean 100 trillion future lives.* Now, suppose some decision made by us today has a 1 in a million chance of extinguishing the human species in this century. That is the sort of risk that we would just ignore. If someone were worrying about a 1-in-a-million risk, we would laugh at that person.** But that risk would have an expected cost of 100 million lives.

*Calculation: say the species lasts for 1 million years, with an average population of 8 billion (close to the current population; it’s not clear whether future population will be higher or lower than this), and say that an average life is 80 years. Then we get: (8,000,000,000 population)(1,000,000 years)/(80 years per life) = 100,000,000,000,000 lives. Obviously, these numbers are guesses. The point is just that the future has very large expected value, if the species doesn’t destroy itself soon.
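For readers who want to check the arithmetic, here is a minimal sketch of the calculation in Python. The function names are mine, invented for illustration; the inputs are the same guesses as in the footnote above, and integer arithmetic is used so the round numbers come out exact.

```python
def future_lives(avg_population: int, years: int, years_per_life: int) -> int:
    """Rough count of future lives: total person-years divided by years per life."""
    return avg_population * years // years_per_life


def expected_lives_lost(lives: int, risk_one_in: int) -> int:
    """Expected cost, in lives, of a 1-in-`risk_one_in` chance of extinction."""
    return lives // risk_one_in


# Guesses taken from the text: 8 billion people, 1 million years, 80-year lives.
lives = future_lives(8_000_000_000, 1_000_000, 80)

# A risk that looks negligible: 1 in a million.
cost = expected_lives_lost(lives, 1_000_000)

print(f"future lives:  {lives:,}")
print(f"expected cost: {cost:,}")
```

Change any of the three guesses by a factor of ten and the expected cost changes by the same factor, but it stays enormous compared with the risks we normally bother to worry about.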

**Aside: this is how people react now to the threat of nuclear war. “Oh, don’t be silly, nobody would start a nuclear war. We’ll never have a leader crazy enough to want a nuclear war.” And “Don’t worry, a nuclear war probably wouldn’t kill everyone.” So we don’t care about the fact that the U.S. President has control of 4,000 nuclear weapons and the legal authority to launch a nuclear first strike at any time, for any reason. When I brought this up once on Facebook, people laughed it off. We’re not close to a nuclear war now!

This is how our species is going to die. Not necessarily from nuclear war specifically, but from ignoring existential risks that don’t appear imminent at this moment. If we keep doing that, eventually, something is going to kill us – something that looked improbable in advance, but that, by the time it looks imminent, is too late to stop.

II. Freedom + Technology = Existential Risk

A. Freedom & crime

Until now in human history, political freedom has been an almost unmitigated good. The freer a society has been, the better off it has been in almost all ways.

But not all ways. Freer societies, for one thing, have more crime. The Soviet-bloc, communist countries had very low crime rates, for obvious reasons. Everyone was afraid of the state, which was constantly spying on the people. They didn’t have rights to privacy of the sort we are supposed to have in the U.S. So the state could effectively suppress crime. But this advantage was vastly outweighed by the general poverty and oppression of those societies.

But that’s only because, up till now, individual criminals couldn’t do that much damage. Sometimes, a crazy person kills multiple people. In extreme cases (terrorists), maybe hundreds. In one extreme case, thousands. Until now, in order to kill more than that many people, you had to be a government.

B. The nature of technology

That’s going to change. I don’t know what future technologies we’re going to have. But there are three very broad features of technologies that we should expect to continue: More advanced technologies are (1) more potent, (2) more efficient, and (3) pervertable.

Meaning: As technology advances, it becomes possible for the person or group using it to produce larger effects, with smaller costs, where costs can be measured in money, in time, in effort and expertise. This makes it possible to do more good, if indeed one is trying to do good. But the power to do good is also the power to do evil, and the power to do a lot of good is also the power to do a lot of evil.

Example: When human beings invented jet airplanes, this enabled us to transport large groups of people a long distance, in a short time, with less expense than earlier transportation methods. To do this, one has to harness large amounts of energy – there will be a large amount of stored chemical energy (fuel), which will be converted into kinetic energy during flight. But as an inevitable side effect, the technology can also cause enormous harm, if that energy is directed in a destructive direction (as in the 9/11 terrorist attack).

Virtually every technology that can do something great can also do something horrible, depending on the intentions of the person using it. The more potent it is for the one purpose, the more potent it will usually be for the other. If a knife can cut broccoli easily, it can also cut human flesh. If an AI system can be used to figure out how to save lives, the same AI can be used to figure out how to destroy lives. If genetic engineering enables us to create smarter, healthier, happier people, the same technology will enable us to create more deadly, difficult-to-kill diseases.

As I say, features (1) – (3) are true of almost every technological advance. Now project that into the future. Assume that technology will continue to advance for a very long way, and that the advances will continue to have these three features that virtually all advances so far have had. What’s the logical extension?

The logical extension is that it becomes possible for a single person, cheaply and easily, to destroy everything.

Of course, I don’t know how exactly, since I don’t now possess the technology that people will have in the future. But that, logically, is where we have to end up, if technology continues to become more potent, more efficient, and remains pervertable.

As an example, perhaps we will develop efficient devices for genetic engineering. A very advanced device would be affordable by ordinary individuals, and would enable a non-expert to genetically modify an organism to have the properties desired by the user. This could be used, say, to create a maximally cute, long-lived, well-behaved kitten . . . or a virus with the transmissibility of Covid-19 and the deadliness of AIDS.

C. Don’t trust people

Once that day arrives, we’re done. If a technology is invented that enables any individual to destroy the species, the next day, the species will be destroyed.

This isn’t a particularly pessimistic view about human nature: this prediction does not depend on thinking “humans are inherently evil”, or “humans are suicidal”. It depends on the well-known fact that humans are diverse. If there are 8 billion people, someone is going to have some crazy ideas. There’s going to be at least one person who is going to somehow think that destroying everything would be a good idea. (See https://fakenous.net/?p=1302, https://fakenous.net/?p=1333.) There are assuredly some such people now – they just haven’t killed all of us because they don’t yet have the ability to do so.
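To see why diversity alone is enough, here is a rough sketch in Python. It rests on two assumptions that are mine, not established facts: each person independently has some tiny probability p of wanting to destroy everything, and the values of p tried below are purely illustrative. Under those assumptions, the chance that at least one such person exists among N people is 1 - (1 - p)^N.

```python
import math


def prob_at_least_one(population: int, p_individual: float) -> float:
    """Chance that at least one of `population` independent people has the trait.

    Computes 1 - (1 - p)^N via log1p/expm1 so that very small p is not lost
    to floating-point rounding.
    """
    return -math.expm1(population * math.log1p(-p_individual))


POPULATION = 8_000_000_000  # figure used in the text

# Hypothetical per-person probabilities, chosen only for illustration.
for p in (1e-12, 1e-10, 1e-9, 1e-8):
    chance = prob_at_least_one(POPULATION, p)
    print(f"p = {p:.0e}: chance at least one such person exists = {chance:.4f}")
```

Even if only one person in a billion is like this, the chance that at least one of them exists comes out at roughly 0.9997. The result only becomes reassuring if p is far below one in 8 billion, which is a strong claim to make about a species as varied as ours.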

III. The Tyranny Solution

That’s the problem with freedom, in an advanced society. What can be done about it?

a. Targeted restrictions: The most natural thought is that we should tightly control just the really dangerous technologies, the ones that could be used to kill millions of people. So far, that’s worked because there aren’t that many such technologies (esp. nuclear weapons). It may not work in the future, though, when there are more such technologies. It may not be possible to anticipate all the ways in which someone might try to destroy the species, and so we may not know in advance all the things that need to be tightly controlled.

b. Defensive technologies: We’ll build defenses against the main threats. E.g., we’ll build defenses against nuclear weapons, we’ll engineer ourselves to resist genetically engineered viruses, etc. Problem: same as above; we may not be able to anticipate all the threats in advance. Also, defense is generally a losing game. It’s easier and cheaper to destroy things than to protect them. That’s why we have the saying “the best defense is a good offense”.

Example: during the cold war, the Soviets were considering building a missile defense system. (Something Reagan later wanted to do as well.) The U.S. Defense Secretary, Robert McNamara, told them: “That’s fine. If you do that, we will not respond by building our own missile defense system. We will respond by building more missiles, to make sure that we could still overwhelm your defenses.” The reason was that it was cheaper to build n missiles than to build a system that would destroy n missiles. The Soviets gave up on the missile defense idea.

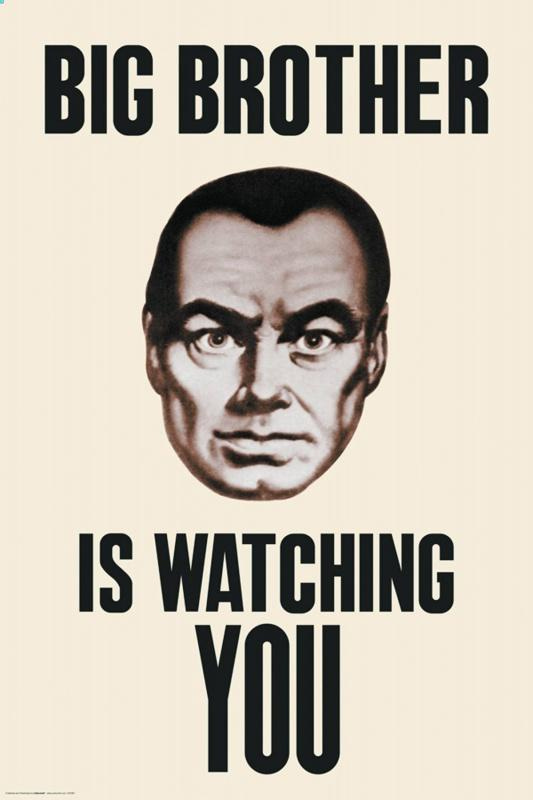

c. Tyranny/the End of Privacy: Maybe in the future, everyone will need to be closely monitored at all times, so that, if someone starts trying to destroy the world, other people can immediately intervene. Sam Harris suggested this in a podcast somewhere. Note: obviously, this applies as well (especially!) to government officials.

d. A better alternative . . . ?

Someone please fill in (d) for me. Thanks.