The Most Important Piece of Knowledge

Feynman’s Question

Suppose that all the knowledge of our civilization were about to be destroyed in some great cataclysm, but we had the opportunity to pass on just one sentence to future generations. Assume that some future society will somehow correctly understand the English meaning of this sentence, and that they will correctly understand it to be the one item of information that an earlier civilization chose to pass on to them. You otherwise know nothing about this future society. You’ve been asked to compose the sentence. What should it say?

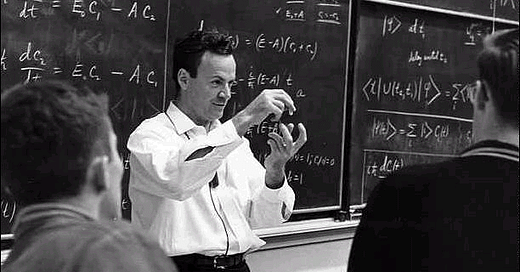

This question comes from Richard Feynman’s lectures on physics (with minor modification by me). Feynman proposed that the one sentence should be a capsule statement of atomic theory:

All things are made of atoms — little particles that move around in perpetual motion, attracting each other when they are a little distance apart, but repelling upon being squeezed into one another.

He goes on to explain how much of modern science is tied to that theory.

You might think that this isn’t a very good candidate for the most important thing to tell future people. If they don’t already have modern science, they’re not going to be able to work out the important implications of that statement.

In fairness, Feynman’s purpose isn’t the same as mine. Feynman was just introducing this hypothetical as a tool for teaching physics — so of course he is going to pick a sentence about physics, and one that gives him the chance to talk about as many important ideas in physics as possible. Fair enough.

But my interest is more like this: What is the most important thing that our civilization has learned? Or, what is the most important idea that we should want to convey to the members of a random human society? (These are not necessarily the same thing, but both are interesting.)

Bad Answers

The question also features in a Radiolab podcast (https://www.wnycstudios.org/podcasts/radiolab/articles/cataclysm-sentence), where a variety of bad answers are proposed by different people. I was quite struck by how terrible some of them were, which made me glad that these people are not actually making this choice.

Some of the answers are harmful absurdities, like the one that says, “There’s no intrinsic value in anything and every action is a futile, meaningless effort.” (Yes, someone proposed that that’s what we should leave to the future.) Others are just useless, like the person who suggested leaving the single-word sentence, “Why?” Others are motivated by parochial concerns, like the guy who thought that racism was the biggest problem in the world (note that most human societies have not had the concept of race), then misdiagnosed racism as being due to fear, then proposed sending the false & misleading information that “The only things you’re innately afraid of are falling and loud noises. The rest of your fears are learned and mostly negligible.”

Anyway, so I started thinking about what might be the most important fact that our society has learned. Before reading on, I suggest taking a minute to think about that question and maybe compose your own answer, which you can share in the comments.

Better Answers

Politics

It is tempting to suggest a capsule statement of libertarian political philosophy (e.g., something like, “There is no authority” or “Coercion is only justified to address force and fraud by others” or “Individuals have the right to live as they choose, provided they do not violate the rights of others” — those are of course vague and imprecise, but they’re in the right spirit) — with the idea that this would lead to a generally flourishing society. This society’s freedom and prosperity would also ease the rediscovery of other human knowledge. It’s plausible that the most important thing that humans have needed to learn throughout history is to stop with all the constant coercion. We’re constantly using force against each other at the drop of a hat, and this is the biggest reason why most societies are terrible.

Epistemology

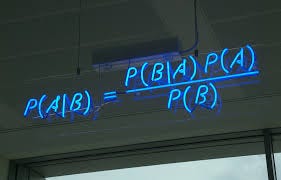

Maybe the best kind of answer is a meta-answer — a statement that is useful because it helps you to discover other useful truths. So maybe it should be some very general, basic statement about epistemology. For instance, maybe we should give the future generations Bayes’ Theorem. It has a trivial proof, but most human societies have not been aware of it, and it plausibly explains most good, non-deductive reasoning, including most scientific reasoning.
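For reference, here is one standard way to write the theorem, together with the short proof alluded to above. The symbols are labels chosen for illustration: H is a hypothesis, E is a piece of evidence, and P(E) is assumed to be greater than zero.

```latex
% Definition of conditional probability, applied in both directions:
%   P(H \mid E)\, P(E) = P(H \wedge E) = P(E \mid H)\, P(H)
% Dividing both sides by P(E) > 0 gives Bayes' Theorem:
P(H \mid E) = \frac{P(E \mid H)\, P(H)}{P(E)}
```

The whole proof is the observation that both products equal the probability of H and E together.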

Or maybe we want something more general. The most important epistemological knowledge is probably something trivial, but something that a huge number of people have nevertheless rejected. Perhaps something like, “Always be rational” or “Virtue is rationality.” I think this advice & statement are almost trivial, but the vast majority of humans have rejected them.

Or perhaps, “Knowledge derives from reason and observation.” Most human societies have been organized around belief systems that have no rational or empirical basis. The fact that one shouldn’t do that is a reasonable candidate for the most important thing that we humans have learned since the dawn of civilization.

Then I started thinking about other very important, general epistemological lessons. Lessons that most human beings have not gotten, which has led to lots of other errors. So here’s one; this probably wouldn’t be a good single sentence to leave to the future (since it requires further explanation), but it’s still one of the most important facts of epistemology: Your priors are too high.

What I mean by this is that most people, when thinking about any non-obvious question, have a certain systematic bias: we tend to think of one theory or a few theories, and then attribute vastly too-high initial credences to the few possibilities that we think of. Like, orders of magnitude too high. Almost always, the few ideas that you initially think of (about theoretical questions, not concrete day-to-day life questions) are all false. That is the core explanation for why almost all theoretical beliefs humans have held – scientific theories, philosophical theories, religions – are pretty much 100% wrong.

Examples: For over a thousand years, the going theory of “chemistry” in Europe was the theory of the four elements: the world is made of the four elements of Earth, Air, Fire, and Water. That is 100% wrong. None of those things are elements. There is also next to zero evidence for the theory. As far as I can tell, someone came up with the idea some time, and it seemed vaguely plausible, so they just assumed it was true. And then other people for centuries held to it because people before them told them it was true. This sort of thing goes on over and over throughout history. Almost every theory that people held was wrong – and not merely a little bit off but radically, utterly false.

Another example: for centuries, the accepted paradigm of medicine was the theory of the four humours: diseases are caused by imbalances of the body’s four fluids, viz., yellow bile, black bile, blood, and phlegm. That’s also completely wrong. And there is almost no evidence for it either.

People didn’t realize that you needed a lot of evidence for a theory. It doesn’t work to just come up with something vaguely plausible and then embrace that. And that’s because almost all theories are false (including theories that seem vaguely plausible). When you assign your priors, you should generally start with the base rate. So most theories should get near-zero prior probabilities.

Sometimes, people have had some evidence for their theory, but it was still totally wrong. E.g., in the case of medieval medical theories, doctors probably collected stories about patients who received treatments based on the existing medical theories, and the patients recovered. The experts just didn’t realize that you need a lot more evidence than that. (They also didn’t sufficiently consider the ways in which we are biased, e.g., doctors might be biased toward remembering or reporting cases that went the way they expected.)

Of course, it isn’t that people assign excessive prior probabilities to every proposition. It’s that we assign excessive priors to the theories that we can think of, and a drastically too-low prior to the “none of the above” alternative, i.e., that the true theory is something not yet conceived.
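To make the role of the “none of the above” alternative concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the hypothesis names, the two sets of priors, and the likelihoods are invented numbers, not data about any real theory.

```python
def posteriors(priors, likelihoods):
    """Apply Bayes' Theorem over mutually exclusive, exhaustive hypotheses.

    priors:      dict mapping hypothesis -> P(H), summing to 1
    likelihoods: dict mapping hypothesis -> P(E | H) for the same evidence E
    """
    joint = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(joint.values())  # this is P(E)
    return {h: joint[h] / total for h in joint}


# Hypothetical evidence: a few anecdotes that fit theory A fairly well.
likelihoods = {
    "theory A": 0.8,
    "theory B": 0.2,
    "something not yet conceived": 0.4,
}

# Inflated priors: almost all the weight goes to the theories we thought of.
overconfident = {
    "theory A": 0.60,
    "theory B": 0.39,
    "something not yet conceived": 0.01,
}

# Base-rate-style priors: most of the weight goes to "none of the above".
cautious = {
    "theory A": 0.05,
    "theory B": 0.05,
    "something not yet conceived": 0.90,
}

if __name__ == "__main__":
    for name, priors in (("overconfident", overconfident), ("cautious", cautious)):
        result = posteriors(priors, likelihoods)
        print(name, {h: round(p, 3) for h, p in result.items()})
```

With these made-up numbers, the same anecdotes leave theory A at roughly 0.85 under the inflated priors but only about 0.10 under the cautious ones. The evidence is identical in both runs; only the weight given to the unconceived alternative differs.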

By the way, it isn’t just the benighted ancient and medieval ‘philosophers of nature’ who did this. I think almost all people today have this same bias. It gets counteracted because we get taught the correct scientific theories about a lot of things (thus relying on a different bias, our bias toward accepting what we are taught). But on questions where we are not taught the scientific answer, and the answer also isn’t obvious to casual observation, humans are incredibly unreliable. Because we, again, just come up with something that strikes us as vaguely plausible, and decide that we believe it.

Example: We’re wondering what causes people to become criminals. We decide that it’s probably because the criminal was abused as a child. That’s the sort of thing that a human would just assume with no evidence, or with only a few anecdotes as evidence. And it might be true. But there are many other possible theories, so one can’t just assume that one.

Even I am tempted by this sort of thing periodically. E.g., I wonder what will happen in the next election. I think of a vaguely plausible explanation for why the President will lose (e.g., Biden is more likeable than Clinton, who came close to defeating Trump in ’16, etc.). Then I’m tempted to go around saying the President will lose. In fact, I have no idea, because there are multiple plausibly relevant factors with unknown weights.

Another way to put the point*: Almost all beliefs require evidence, and they require a lot of it. Way more than you’re thinking.

* Aside: Is that really another phrasing of the same point? Yes. Your final probability for a hypothesis H depends on two things: the prior probability you assign to it before taking account of evidence, and how much evidence you have for it. If you have a low prior, you need more evidence; if you have a high prior, you need less. So the mistake of assigning excessive priors is the same mistake as that of demanding too little evidence.
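The trade-off between prior and evidence can be put in numbers using the odds form of Bayes’ Theorem: posterior odds equal prior odds times the likelihood ratio, where the likelihood ratio measures how much more probable the evidence is if H is true than if H is false. The sketch below is illustrative only; the function names, the 0.95 target, and the sample priors are assumptions picked for the example.

```python
def posterior(prior: float, likelihood_ratio: float) -> float:
    """Posterior P(H | E) given the prior P(H) and the likelihood ratio
    P(E | H) / P(E | not-H), using the odds form of Bayes' Theorem."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


def required_likelihood_ratio(prior: float, target: float) -> float:
    """Likelihood ratio needed to raise a belief from `prior` to `target`."""
    target_odds = target / (1.0 - target)
    prior_odds = prior / (1.0 - prior)
    return target_odds / prior_odds


if __name__ == "__main__":
    # Hypothetical priors: a coin flip, a long shot, and a base-rate-style prior.
    for prior in (0.5, 0.1, 0.001):
        ratio = required_likelihood_ratio(prior, target=0.95)
        print(f"prior {prior}: evidence must be about {ratio:.0f} times "
              f"likelier if H is true than if it is false")
```

Reaching 95% confidence takes a likelihood ratio of about 19 when the prior is 0.5, about 171 when it is 0.1, and about 19,000 when it is 0.001. Someone who starts at 0.5 when the base rate warrants 0.001 is therefore accepting evidence roughly a thousand times too weak.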